Terraform with AWS: A Beginner’s Practical Guide

Terraform is a source-available Infrastructure as Code (IaC) tool by HashiCorp (open source until its 2023 move to the Business Source License) that lets you define and manage cloud infrastructure using human-readable configuration files. In practice, this means you can use simple text files to describe all your AWS resources (servers, networks, databases, etc.) and let Terraform create, update, or delete them automatically. The global IaC market is booming – valued at over $1 billion in 2024 – as organizations increasingly adopt tools like Terraform to automate and version-control their infrastructure. By pairing Terraform with AWS (a leading cloud platform), beginners can quickly provision and manage AWS services in a repeatable, consistent way.

Why Use Terraform with AWS?

Using Terraform with AWS combines the power of a declarative IaC tool with AWS’s vast cloud offerings. Key advantages include:

- Declarative, human-readable configs: You declare what resources you want (e.g. “an EC2 instance in us-east-1”), and Terraform figures out how to create them. The configuration uses HCL (HashiCorp Configuration Language), which is easy to read and write.

- Multi-cloud and AWS support: Terraform works with virtually every major cloud provider. For AWS, it can automate almost any resource: EC2 instances, S3 buckets, RDS databases, VPCs, Lambda functions, and more. In fact, as of May 2024 the Terraform AWS provider supports 1366 resource types and 557 data sources, covering nearly all AWS features.

- Version control & collaboration: Terraform configurations are plain text. You can store them in Git (or any VCS) so teams can collaborate, review changes, and track history. This brings software-style versioning to your infrastructure.

- Plan and preview changes: Before applying any changes, Terraform’s plan command lets you preview exactly what will happen. This “dry run” helps catch mistakes early and ensures team members agree on changes.

- Safe idempotency with state: Terraform keeps a state file that maps your configuration to real AWS resources. This state lets Terraform know what already exists, so it only makes the necessary changes. You get idempotent, repeatable deployments every time.

- Automation and consistency: Once written, the same Terraform code can create dev, test, or prod environments. Everything is automated and consistent. A recent summary notes that this approach “ensures consistency and repeatability” in infrastructure deployments, a core DevOps best practice.

Put together, these features make Terraform an ideal tool for beginners who want a hands-on, code-driven way to manage AWS infrastructure. You’re essentially treating infrastructure like software: writing code, reviewing changes, and automating deployments.

Getting Started: Prerequisites for Terraform with AWS

Before writing any Terraform, make sure you have:

- AWS Account and Credentials: You need an AWS account. In AWS IAM (Identity & Access Management), create a user (or use an existing one) with permissions to create the resources you need (e.g. EC2, S3, etc.). Then configure credentials on your machine in one of two ways:

  - AWS CLI: Install the AWS CLI and run aws configure. Enter the IAM user’s Access Key ID, Secret Access Key, default region, and output format (JSON, etc.). This creates the ~/.aws/credentials and ~/.aws/config files.

  - Environment Variables: Alternatively, export your keys as the environment variables AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY (and, optionally, AWS_DEFAULT_REGION). Terraform (and the AWS SDKs) will pick these up automatically.

- Terraform CLI: Download and install the Terraform binary (v1.5+ is recommended) from HashiCorp’s site. Confirm it’s installed by running terraform version.

- Text Editor/IDE: Any editor will do. Terraform files have a .tf extension.

Once AWS credentials are set and Terraform is installed, you’re ready to write a configuration.

Writing Your First Terraform Configuration

Terraform configurations are usually placed in files with a .tf extension (commonly main.tf, variables.tf, etc.). In AWS projects, you start by specifying the AWS provider and then defining AWS resources. Here’s a simple example in a file called main.tf:
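A minimal sketch of such a main.tf, limited to the two blocks described next. The AMI ID is a placeholder assumption: AMI IDs are region-specific and change over time, so substitute a current one for us-east-1 (for example, from the EC2 console) before applying.

```hcl
# main.tf

# Provider block: use AWS, in the us-east-1 region.
provider "aws" {
  region = "us-east-1"
}

# Resource block: a single EC2 instance labeled "example".
resource "aws_instance" "example" {
  # Placeholder (assumption): replace with a real AMI ID valid in us-east-1.
  ami           = "ami-0123456789abcdef0"
  instance_type = "t2.micro"
}
```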

This configuration does the following:

- Provider block: Tells Terraform we’re using AWS and sets the region (here, us-east-1). Terraform will download the AWS provider plugin on initialization.

- Resource block: Defines an aws_instance named example. We specify the Amazon Machine Image (AMI) ID and the instance type (t2.micro for free tier). When applied, this creates a new EC2 instance in your AWS account.

You can adapt this example to other resources. For instance, to create an S3 bucket, your config might include a resource "aws_s3_bucket" block with a bucket name and settings. (See more examples in official docs or tutorials.) The key idea is: everything you want to create in AWS has a Terraform resource you can configure.
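As an illustration of the S3 case, the sketch below declares a bucket and turns on versioning. The resource label logs, the bucket name, and the tag are hypothetical; bucket names are globally unique, so pick your own. It assumes AWS provider v4 or later, where versioning is configured through a separate resource.

```hcl
# Hypothetical S3 bucket; the name must be globally unique.
resource "aws_s3_bucket" "logs" {
  bucket = "my-example-logs-bucket-12345"

  tags = {
    Environment = "dev"
  }
}

# Versioning is its own resource in AWS provider v4 and later.
resource "aws_s3_bucket_versioning" "logs" {
  bucket = aws_s3_bucket.logs.id

  versioning_configuration {
    status = "Enabled"
  }
}
```

The reference aws_s3_bucket.logs.id also tells Terraform to create the bucket before it configures versioning.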

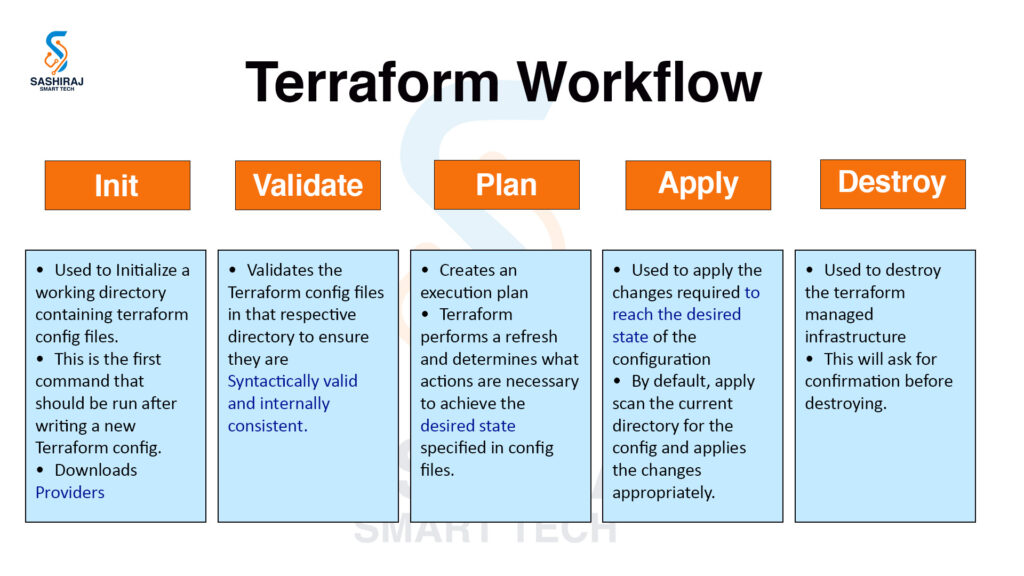

Terraform with AWS Workflow: Init, Plan, Apply, Destroy

Once your .tf files are ready, use Terraform’s CLI to enact them. The basic workflow has four steps:

- Initialize: Run terraform init. This command downloads the required provider plugins (AWS, etc.) and sets up your working directory. It creates a hidden .terraform folder and a lock file (.terraform.lock.hcl) to track plugin versions. Initialization only needs to be done once per directory (or again if you change providers/modules).

- Plan (Preview changes): Run terraform plan. Terraform reads your configuration and compares it to the current state. It then shows you a summary of actions (e.g. “+ create” for new resources) that it will perform if applied. This step is critical for validation: you can review what Terraform plans to do before making any changes, which helps prevent mistakes. For the single-instance configuration above, the plan output ends with a summary line such as “Plan: 1 to add, 0 to change, 0 to destroy.”, which confirms Terraform will create one EC2 instance as specified.

- Apply (Make changes): Run terraform apply. After you review the plan, this command actually provisions the resources on AWS. Terraform will prompt you to confirm (type yes). It then creates the EC2 instance (or other resources). This step may incur AWS costs (especially on non-free-tier resources), so be sure you really want to apply. Once applied, Terraform updates its local state file (terraform.tfstate) to record the real-world IDs and attributes of the created resources.

- Destroy (Clean up): When you no longer need the resources, run terraform destroy. Terraform will again show a plan (with “- destroy” actions) and ask for confirmation. Typing yes will delete all resources managed by this configuration. This is how you tear down your infrastructure in one shot. It’s a good way to ensure you don’t leave stray resources running (and incurring costs) after experiments.

Throughout this workflow, remember:

- You can re-run terraform plan and terraform apply anytime after editing the config to make incremental changes.

- The terraform.tfstate file is how Terraform knows what exists. Never delete it unless you want Terraform to “forget” the resources. (For teams, you can use remote state backends like S3, but that’s advanced.)

- You should check your AWS console or CLI to see the actual resources being created. For example, after apply, you’ll see your new EC2 instance under the EC2 dashboard.

Key Terraform Commands

Some other useful Terraform commands:

- terraform refresh: updates the state file to match real-world resources. Recent Terraform releases deprecate it in favor of terraform apply -refresh-only.

- terraform show: displays the state or a saved plan in a human-readable format.

- terraform validate: checks the syntax and validity of your configuration without contacting any cloud (run terraform init first so the providers are installed).

- terraform fmt: formats your .tf code to a canonical style.

- terraform fmt -check: returns an error if files are not formatted.

- terraform graph: (advanced) outputs the resource dependency graph in DOT format.

However, init, plan, apply, and destroy are the core commands for beginners.

Example: Deploying an EC2 Instance

Let’s walk through a concrete example of deploying an EC2 instance on AWS with Terraform:

- Write configuration: Use the main.tf from above (provider + aws_instance).

- Initialize: terraform init downloads the AWS provider plugin (e.g. hashicorp/aws v5.0.0).

- Plan: terraform plan confirms Terraform will create 1 EC2 instance.

- Apply: terraform apply creates the EC2 instance in AWS after you confirm.

- Verify: Check the AWS EC2 console (you should see the new instance running).

- Destroy: When done, run terraform destroy to delete the instance.

This simple cycle (init → plan → apply → verify → destroy) shows how quickly you can go from code to running infrastructure. You could expand this same config to add more resources (for example, a security group, an EBS volume, or tags on the instance) and simply re-run plan and apply to update the infrastructure. Terraform creates only the new resources and updates changed ones, in place where AWS allows it, leaving everything else intact (unless you delete it from the code).
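One way to extend the instance is sketched below, with a security group and a Name tag. The group name, the CIDR range (a documentation-only range), the tag value, and the AMI ID are all hypothetical placeholders. The sketch assumes your account has a default VPC, since no vpc_id is set.

```hcl
# Hypothetical security group allowing inbound SSH from one address range.
resource "aws_security_group" "ssh" {
  name        = "example-ssh"
  description = "Allow inbound SSH"

  ingress {
    description = "SSH"
    from_port   = 22
    to_port     = 22
    protocol    = "tcp"
    cidr_blocks = ["203.0.113.0/24"] # placeholder; use your own IP range
  }

  # Allow all outbound traffic.
  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }
}

# The same instance as before, now attached to the group and tagged.
resource "aws_instance" "example" {
  ami           = "ami-0123456789abcdef0" # placeholder AMI ID
  instance_type = "t2.micro"

  vpc_security_group_ids = [aws_security_group.ssh.id]

  tags = {
    Name = "terraform-example"
  }
}
```

If the instance already exists, the next terraform plan should typically list the security group as a resource to add and the instance as a change rather than a new resource.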

Pro Tip: Two files deserve attention as you work through this cycle:

.terraform.lock.hcl: created by terraform init, it locks the provider plugin version for repeatable builds. Include this file in version control so your team uses the same plugin versions.

terraform.tfstate: created on the first terraform apply, it keeps the state of your deployed infra. By default it’s stored locally, but for real projects you can configure a remote state backend (like an S3 bucket) to share state across your team.
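Both ideas can be expressed in a terraform block, sketched here under some assumptions: the version constraints are illustrative, and the bucket name and key are hypothetical. The S3 bucket has to exist before Terraform can store state in it.

```hcl
terraform {
  # Illustrative constraint matching the v1.5+ recommendation.
  required_version = ">= 1.5.0"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0" # any 5.x release; the lock file records the exact one
    }
  }

  # Remote state in S3 (hypothetical bucket and key).
  backend "s3" {
    bucket  = "my-terraform-state-bucket"
    key     = "beginner-guide/terraform.tfstate"
    region  = "us-east-1"
    encrypt = true
  }
}
```

After adding or changing a backend block, re-run terraform init so Terraform can configure the backend and offer to migrate existing local state.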

Best Practices and Tips

- Use variables: Don’t hard-code values like AMIs or instance types. Use variables in variables.tf and terraform.tfvars to make your configurations reusable and configurable.

- Name resources clearly: Give meaningful names to your Terraform resources (the labels in quotes). These names appear in state and in plan outputs.

- Version your configurations: Keep your .tf files in Git. This way, you can track changes, perform code reviews, and roll back if needed.

- Start small: Begin with one resource (like an EC2 instance or an S3 bucket) to understand the process. Then gradually add more (networks, databases, etc.).

- Leverage modules: For larger projects, organize common patterns into Terraform modules (reusable components). The Terraform Registry has many community and official modules (for example, a VPC module).

- Check AWS limits: AWS enforces per-region service quotas (for example, On-Demand EC2 capacity is capped by a vCPU quota). An apply fails if you exceed them, so make sure your configs stay within your account’s quotas.

- Clean up resources: Always run terraform destroy (or remove resources from the config and re-apply) when you’re done experimenting. Leftover resources can cost money.

- Secure your credentials: Never commit AWS access keys, or a terraform.tfstate file (which can contain sensitive data), to Git. Use IAM roles or IAM users with limited permissions for Terraform.
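The variables tip can be sketched as follows. The variable names, the output name, and the three-file split are illustrative choices, not requirements; Terraform loads every .tf file in the working directory.

```hcl
# variables.tf
variable "instance_type" {
  description = "EC2 instance type"
  type        = string
  default     = "t2.micro"
}

variable "ami_id" {
  description = "AMI ID valid in the chosen region"
  type        = string
  # No default: supply it in terraform.tfvars or with -var on the command line.
}

# main.tf
resource "aws_instance" "example" {
  ami           = var.ami_id
  instance_type = var.instance_type
}

# outputs.tf
output "instance_public_ip" {
  description = "Public IP address of the example instance"
  value       = aws_instance.example.public_ip
}
```

A matching terraform.tfvars would then contain a single line such as ami_id = "ami-0123456789abcdef0" (a placeholder value). Terraform loads that file automatically.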

Terraform in DevOps and Further Learning

Terraform is a cornerstone tool in modern DevOps workflows. By codifying infrastructure, it aligns perfectly with DevOps principles – enabling version control, peer review, automated testing, and continuous delivery of not just application code, but infrastructure too. As one summary notes, using Terraform “ensures consistency and repeatability” in deployments, which is at the heart of DevOps best practices.

Many DevOps courses and training programs now include Terraform as a core skill. For example, a Bangalore-based DevOps curriculum explicitly lists Terraform as the infrastructure tool that lets you “build and manage servers, networks, and storage automatically”. Hands-on experience with Terraform (e.g. through projects or lab exercises) is often what employers expect for junior DevOps roles.

Terraform with AWS: Conclusion and Next Steps

Terraform with AWS empowers you to manage cloud infrastructure with code, making deployments fast, reliable, and scalable. You’ve learned the basics: defining providers and resources, running terraform init, plan, and apply, and cleaning up with terraform destroy. As you practice, try extending your configurations: add networking components (like VPCs), use variables and outputs to make your code dynamic, and explore Terraform modules for AWS for reuse.
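As a starting point for the networking step, this sketch calls the community VPC module from the Terraform Registry. The VPC name, CIDR ranges, and availability zones are hypothetical values, and the version constraint is an assumption; check the module’s registry page for current releases.

```hcl
# Community VPC module from the Terraform Registry.
module "vpc" {
  source  = "terraform-aws-modules/vpc/aws"
  version = "~> 5.0" # assumed constraint

  name = "beginner-vpc"
  cidr = "10.0.0.0/16"

  azs             = ["us-east-1a", "us-east-1b"]
  public_subnets  = ["10.0.1.0/24", "10.0.2.0/24"]
  private_subnets = ["10.0.101.0/24", "10.0.102.0/24"]
}

# Expose the new VPC's ID for use elsewhere.
output "vpc_id" {
  value = module.vpc.vpc_id
}
```

Adding a module requires running terraform init again so Terraform can download it.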

If you’re serious about mastering Terraform and the broader AWS DevOps ecosystem, structured learning can help. For instance, Sashiraj SmartTech – one of the best DevOps training institutes in Bangalore – offers an AWS with DevOps course that covers Terraform with AWS along with other key DevOps tools. Their program includes hands-on labs, projects, and certification guidance, making it ideal for students and junior engineers (whether in India or studying abroad) who want a comprehensive DevOps education. Learn more about the AWS with DevOps Course by Sashiraj SmartTech to deepen your skills and advance your DevOps career.
